Want to get into the DevOps sector?

Or are you a working professional whose company already follows DevOps practices?

We provide DevOps classes in Coimbatore. Our classes are 100% practical, and we teach advanced DevOps in an easy way.

No matter where you are, you will interact with DevOps tools in your daily work.

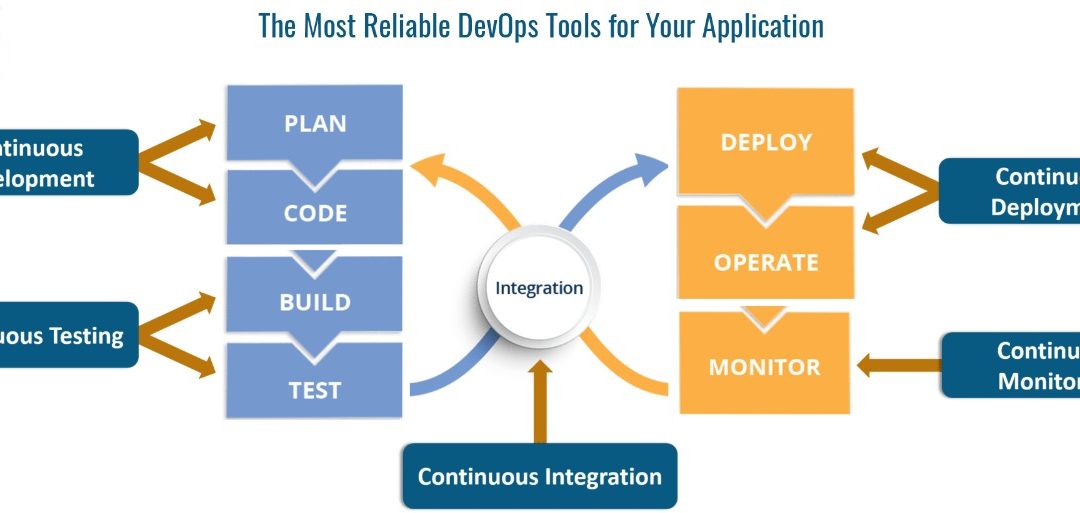

We have put together a list of proven DevOps tools that companies use throughout the application lifecycle. These tools cover every stage of that lifecycle, from writing and testing code to deployment, monitoring, and security.

The Most Reliable DevOps Tools for Your App Life Cycle

- Application Life Cycle: Code Writing

Source control

Git is the de facto standard for version control of source code. It is easy to learn basic commands like clone, commit, and push, but there are many more.

Can you resolve merge conflicts? Squash your commits and merge your branch into master? Undo a change you made by mistake? If you do not know the answers to these questions, you should learn them quickly. Any good DevOps engineer can use these commands without hesitation.
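As a rough illustration of the questions above, the sketch below lists the Git commands one might run to squash a branch into master and to undo a mistaken commit. The branch name `feature/login`, the commit message, and the helper names `squash_merge` and `undo_commit` are hypothetical. The commands are only assembled and printed, not executed.

```python
from typing import List

Command = List[str]


def squash_merge(branch: str, message: str, target: str = "master") -> List[Command]:
    """Commands that squash a branch into one commit on the target branch."""
    return [
        ["git", "checkout", target],
        # --squash stages the combined changes without creating a commit
        ["git", "merge", "--squash", branch],
        ["git", "commit", "-m", message],
    ]


def undo_commit(commit: str) -> List[Command]:
    """Command that undoes a commit by adding a new commit that reverses it."""
    return [["git", "revert", "--no-edit", commit]]


if __name__ == "__main__":
    # Hypothetical branch and message, for illustration only
    for cmd in squash_merge("feature/login", "Add login form") + undo_commit("HEAD"):
        print(" ".join(cmd))
```

Using `git revert` here is one possible choice: it keeps the shared history intact, which tends to be safer on a branch that colleagues have already pulled.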

Software development platform

Git integrates with software development platforms such as GitHub, Azure DevOps, AWS CodeCommit, and more. These platforms enable developers to collaborate on software, open pull requests (PRs), and resolve conflicts. You need to understand how to use these platforms so that you can collaborate with your colleagues on your projects. Engineers who build deployment pipelines also need to know how to integrate source control into a continuous delivery platform.

- Application Life Cycle: Build and Test

Continuous delivery

Now that you know about the software development platform, it's time to talk about continuous delivery. At this point, you use the code in version control to create pipelines that build, test, and deploy your software. Software development platforms often provide built-in tools for creating pipelines. If you are starting from scratch, you will most likely use the built-in pipeline feature. It is fully integrated into the platform, with integrations for source control, testing (through build tool integrations), and deployment (for example, to Kubernetes).
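To make the idea of a build, test, and deploy pipeline concrete, here is a minimal sketch of the control flow: stages run in order, and a failure stops everything after it. This is not how any particular platform implements pipelines. The `run_pipeline` function and the stand-in stages are assumptions made for illustration.

```python
from typing import Callable, List, Tuple

# A stage is a name plus a function that returns True on success
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[str]:
    """Run stages in order and stop at the first failure; return a result log."""
    log = []
    for name, step in stages:
        ok = step()
        log.append(f"{name}: {'ok' if ok else 'failed'}")
        if not ok:
            break  # later stages (for example deploy) must not run
    return log


if __name__ == "__main__":
    stages = [
        ("build", lambda: True),
        ("test", lambda: False),   # simulated failing test
        ("deploy", lambda: True),  # never reached in this run
    ]
    print(run_pipeline(stages))
```

The key property is that a failed test prevents deployment, which is the main safety guarantee a delivery pipeline is meant to give.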

Besides the tools built into your software development platform, there is Jenkins, a well-known open-source tool that has been around for a long time and is widely used. Jenkins can serve as a complete continuous delivery platform, handling the build, test, and deployment phases. It can also be integrated into existing platforms. For example, AWS CodeBuild can build and test your software, but you also have the option to integrate with Jenkins and let Jenkins handle the build, test, and deployment phases.

- Application Life Cycle: Deployment

Continuous deployment

As part of a continuous delivery cycle, you can continuously deploy your applications. Today you usually use containers, so let's cover those first.

To deploy containers, you need a container orchestration platform. One of the most popular is Kubernetes. It is available as a hosted platform on virtually all public cloud providers. While Kubernetes can do a lot for you, it is a complex tool with its own ecosystem. If you are looking for something more manageable, take a look at what cloud vendors offer. AWS offers Elastic Container Service (ECS), a container orchestrator that is simpler to use. Other useful tools for building and testing your containers locally are Docker Compose and Docker Swarm, an orchestrator made by Docker.
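To give a feel for what an orchestrator like Kubernetes expects as input, the sketch below builds a minimal Deployment manifest as a Python dictionary and prints it as JSON. The application name `web`, the image `example/web:1.0`, the port, and the helper `deployment_manifest` are hypothetical values chosen for illustration.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 2, port: int = 8080) -> dict:
    """Build a minimal Kubernetes Deployment manifest as a plain dictionary."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            "replicas": replicas,  # how many copies of the container to run
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


if __name__ == "__main__":
    # Hypothetical application name and image
    print(json.dumps(deployment_manifest("web", "example/web:1.0"), indent=2))
```

Kubernetes accepts manifests in JSON as well as YAML, so output like this could in principle be saved to a file and applied with `kubectl apply -f`. A real deployment would usually also need resource limits, health probes, and a Service.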

As you set up your pipeline to build, test, and deploy, you will see that many platforms integrate with Kubernetes out of the box. Azure DevOps, for example, has an integration for Microsoft's own Kubernetes offering, Azure Kubernetes Service (AKS), which allows you to deploy your code to Kubernetes easily from Azure DevOps.

Deploying with Docker

Containers have many advantages. You begin by writing a Dockerfile that describes how to build your Docker image. This Dockerfile is the only file you need to define how your container image is built. You can build and run containers on your own workstation or build them as part of your continuous delivery process. Once the image is built, it can run on any container orchestrator, such as Kubernetes.
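As a sketch of what such a file might contain, the snippet below renders a very small Dockerfile for a Python script. The base image `python:3.12-slim`, the file name `app.py`, and the helper `render_dockerfile` are assumptions for illustration. A real Dockerfile would be tailored to your application.

```python
def render_dockerfile(base_image: str, app_file: str) -> str:
    """Render a minimal Dockerfile that copies one script and runs it."""
    lines = [
        f"FROM {base_image}",             # base image to build on
        "WORKDIR /app",                   # working directory inside the image
        f"COPY {app_file} .",             # copy the script into the image
        f'CMD ["python", "{app_file}"]',  # command run when the container starts
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical base image and script name
    print(render_dockerfile("python:3.12-slim", "app.py"), end="")
```

Saved as `Dockerfile` next to the script, output like this could typically be built with `docker build` and started with `docker run`.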

Containers are isolated at the kernel level, so they share the host's kernel instead of booting a full operating system. As a result, containers start faster than a virtual machine (VM). Isolation covers the network, resources, and processes. That means you can run multiple containers on one machine without port or resource conflicts.

In general, the adoption of containers, and Docker in particular, has made it easy to deploy, reuse, and manage containers within your environment. Kubernetes handles resource utilization, scheduling, scalability, security, and networking, letting you focus on what happens inside the container. Thanks to the availability of Kubernetes as a managed service from public cloud providers, you can run these containers wherever you want. The only caveat is that you still need access to your data, so a cross-cloud approach is not as simple as you might expect.

Deploying without Docker

Not using Docker is still an option. Continuous delivery was already in place before Docker came along. In that case, the right approach is to create a virtual machine (VM) image rather than a container image. The same strategy as for containers still applies, but the artifact is different: a VM image instead of a container image. HashiCorp's Packer is a tool that you can use to create these images. Packer supports VMware for on-premises deployments as well as cloud providers such as AWS. Creating a VM image takes considerably longer than building a container image, but the end result is the same. You then need to deploy your images across your infrastructure, and for that, you need orchestration tools.

A notable deployment tool here is Spinnaker, which Netflix developed to roll out changes across its constantly growing infrastructure. With Spinnaker, you can trigger the creation of these images, integrate with Jenkins for build and test, and deploy the images to your cloud infrastructure.